Walk-Forward vs. Backtesting: Key Differences

Compare backtesting and walk-forward analysis: speed vs realism, overfitting risks, and when to use each for betting strategies.

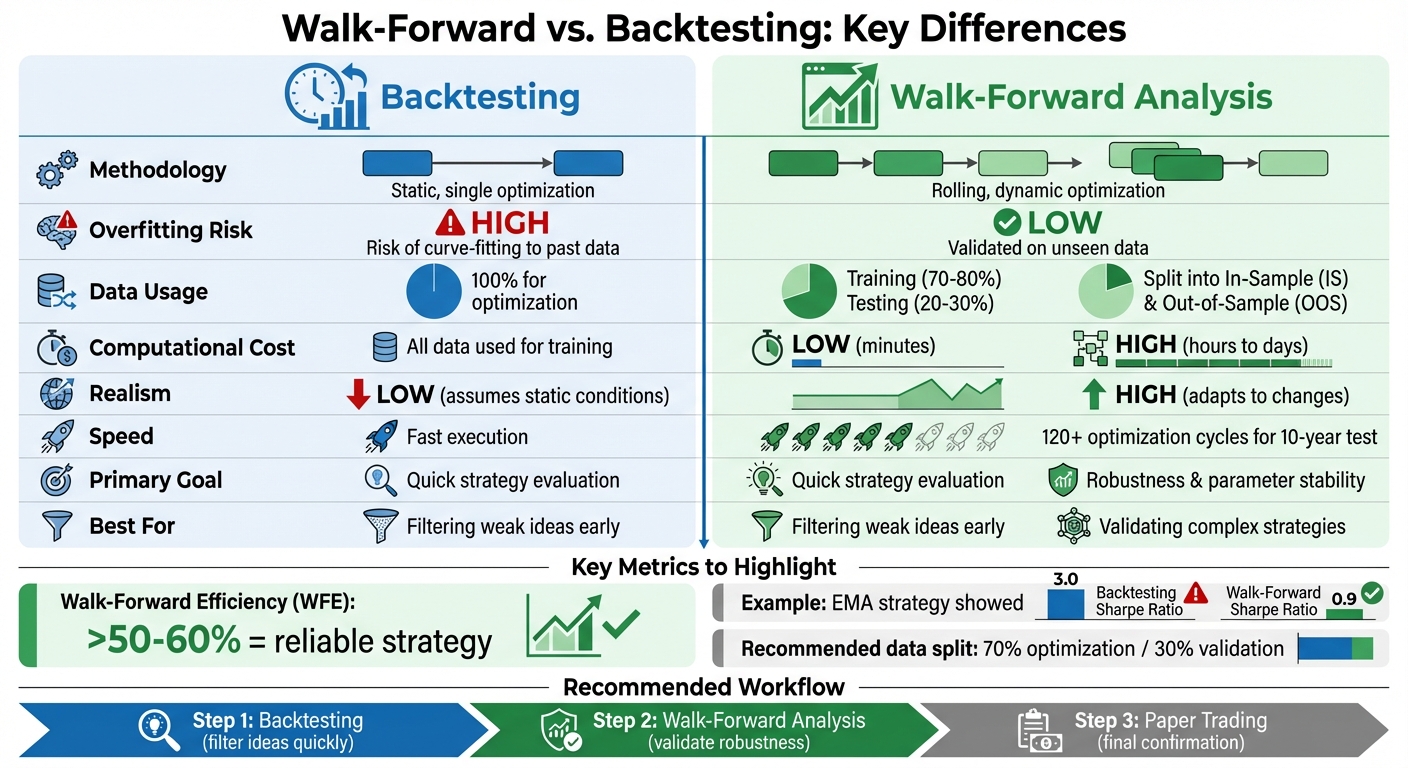

When testing betting strategies, you have two primary approaches: backtesting and walk-forward analysis. Backtesting uses historical data to evaluate a strategy’s past performance, while walk-forward analysis simulates real-time adjustments by testing on unseen data segments. Here’s the key takeaway: backtesting is faster and simpler but risks overfitting, whereas walk-forward analysis is more realistic for evolving markets but requires more time and computational effort.

Key Points:

- Backtesting: Static, uses all historical data at once, fast but prone to overfitting and unrealistic assumptions.

- Walk-Forward Analysis: Rolling method, tests on unseen data, reduces overfitting, better for changing conditions, but slower and resource-intensive.

Quick Comparison:

| Feature | Backtesting | Walk-Forward Analysis |
|---|---|---|
| Methodology | Static, single optimization | Rolling, dynamic optimization |
| Overfitting Risk | High | Low |
| Data Usage | 100% for optimization | Split into training/testing |
| Computational Cost | Low | High |
| Realism | Low | High |

Use backtesting to filter ideas quickly, then apply walk-forward analysis to validate strategies for live betting. Together, they create a more reliable process.


What is Backtesting?

Backtesting involves testing a betting strategy against historical data to evaluate how it might have performed in the past. Essentially, you take a model - built on statistical trends or specific situational factors - and apply it to past games to determine if it has potential. The idea is simple: assess the strategy's effectiveness before risking any actual money.

The process is appealing because of the sheer availability of historical data. With modern tools, you can simulate thousands of bets in minutes, calculate theoretical ROI, and tweak your approach - all without spending a dime. But while it sounds foolproof, backtesting has its flaws, and even seasoned bettors can be misled by its limitations.

How Backtesting Works

Backtesting typically follows a step-by-step process. First, you define your strategy with clear rules. For example, you might decide to bet on home underdogs in specific matchups. Then, you collect historical data - this could include game results, opening and closing lines, weather conditions, and injury reports over a chosen period.

Next, you simulate bets by applying your strategy to each game that fits your criteria, tracking wins and losses based on historical outcomes and odds. Finally, you analyze performance metrics like ROI, win rate, or risk-adjusted measures such as the Sharpe ratio to see how your strategy stacks up.
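The steps above can be sketched in a few lines of Python. This is a minimal illustration, not a production backtester. It assumes a hypothetical `Game` record holding the decimal odds available at bet time, a flat one-unit stake, and no pushes. The field names and the "home underdog" rule are placeholders, not a real data feed. The Sharpe-style figure is computed per bet and is not annualized.

```python
from dataclasses import dataclass
from statistics import mean, pstdev

@dataclass
class Game:
    home_is_underdog: bool
    home_decimal_odds: float  # price available when the bet would have been placed
    home_won: bool

def backtest_home_underdogs(games, stake=1.0):
    """Flat-stake backtest of a hypothetical 'bet every home underdog' rule."""
    returns = []  # profit per unit staked, one entry per bet placed
    for g in games:
        if not g.home_is_underdog:
            continue  # rule does not fire, so no bet
        profit = stake * (g.home_decimal_odds - 1) if g.home_won else -stake
        returns.append(profit / stake)
    if not returns:
        return {"bets": 0, "roi": 0.0, "win_rate": 0.0, "sharpe_per_bet": 0.0}
    sd = pstdev(returns)
    return {
        "bets": len(returns),
        "roi": sum(returns) / len(returns),  # total profit / total staked
        "win_rate": sum(r > 0 for r in returns) / len(returns),
        # Mean over standard deviation of per-bet returns; not annualized
        "sharpe_per_bet": mean(returns) / sd if sd > 0 else 0.0,
    }

# Invented history: three home underdogs (one win) and one favorite (skipped)
history = [Game(True, 2.5, True), Game(True, 2.2, False),
           Game(False, 1.6, True), Game(True, 2.4, False)]
print(backtest_home_underdogs(history))
```

On this toy history the rule places three bets and wins one, for an ROI of about -16.7%. The example is small, but every number it reports comes from applying fixed rules to past results, which is exactly what a backtest does.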

This process relies on static parameters optimized for the entire historical period. It assumes that the patterns seen in the past will continue into the future. While efficient, this assumption introduces some key risks.

Limitations of Backtesting

One of the biggest challenges with backtesting is overfitting, where your model picks up on random noise or anomalies in the data instead of genuine trends. This can lead to a strategy that looks great on paper but falls apart when applied in real-world scenarios.

Another issue is look-ahead bias, which happens when the model uses information that wouldn’t have been available at the time of the bet - like closing lines, final scores, or last-minute injury updates. This makes the results seem far better than they realistically would be.
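One practical guard is to look up every input "as of" the moment the bet would have been placed. The sketch below assumes odds are stored as timestamped snapshots sorted oldest-first; that format and the function name `odds_as_of` are hypothetical. The same pattern applies to injury reports or weather.

```python
import bisect
from datetime import datetime

def odds_as_of(snapshots, bet_time):
    """Latest price recorded at or before bet_time, or None if none existed yet.

    snapshots: list of (timestamp, decimal_odds) tuples sorted oldest-first.
    Anything stamped after bet_time, such as the closing line, is ignored.
    """
    times = [t for t, _ in snapshots]
    i = bisect.bisect_right(times, bet_time)  # number of snapshots with t <= bet_time
    return snapshots[i - 1][1] if i > 0 else None

line_moves = [(datetime(2024, 9, 1, 9, 0), 2.10),    # opening price
              (datetime(2024, 9, 1, 15, 0), 2.00),
              (datetime(2024, 9, 1, 19, 55), 1.90)]  # closing price
print(odds_as_of(line_moves, datetime(2024, 9, 1, 12, 0)))  # a noon bet sees 2.1
```

A backtest that books every bet at the 1.90 closing price, while claiming to bet at noon, would be using information no bettor had at noon.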

Backtesting also often overlooks the impact of market friction - the real-world costs that eat into profits. These include:

- The bookmaker’s vig (a profit margin typically around 4.5–5% on standard lines)

- Slippage, where odds change before you can place your bet

- Liquidity limits, which can prevent you from betting at optimal odds

Even a strategy with a small theoretical edge can become unprofitable once these costs are accounted for.
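The arithmetic behind that last point is easy to check. The sketch below assumes standard American odds and a hypothetical model that gives a side a 53% chance of winning; that figure is illustrative only. It computes three things: the bookmaker's hold on a -110/-110 market, the break-even win rate at -110, and how a single line move from -110 to -115 (slippage) turns a positive expected value negative.

```python
def american_to_decimal(american):
    """Convert American odds (e.g. -110 or +150) to decimal odds."""
    return 1 + (100 / -american if american < 0 else american / 100)

def bookmaker_hold(*american_prices):
    """Bookmaker margin implied by the prices on every side of one market."""
    overround = sum(1 / american_to_decimal(a) for a in american_prices)
    return 1 - 1 / overround

def break_even_win_rate(american):
    """Win probability needed for zero expected profit at this price."""
    return 1 / american_to_decimal(american)

def expected_value(win_prob, american):
    """Expected profit per unit staked, given your own win probability."""
    return win_prob * (american_to_decimal(american) - 1) - (1 - win_prob)

print(f"hold at -110/-110: {bookmaker_hold(-110, -110):.2%}")  # 4.55%
print(f"break-even at -110: {break_even_win_rate(-110):.2%}")  # 52.38%
print(f"EV at -110: {expected_value(0.53, -110):+.4f}")        # small positive edge
print(f"EV at -115: {expected_value(0.53, -115):+.4f}")        # after slippage: negative
```

A 53% model clears the 52.38% break-even rate at -110 by less than a point. Losing just five cents of price is enough to erase that edge.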

| Limitation | Description | Impact on Betting Model |
|---|---|---|
| Vig/Commission | Bookmaker's profit margin | Can turn a theoretical edge into a net loss |
| Slippage | Difference between expected and actual odds | Reduces ROI as the "best" odds may no longer be available |
| Look-ahead Bias | Using future data in training | Creates overly optimistic historical results |
| Market Regime Shift | Market evolution over time | Causes model performance to deteriorate as conditions change |

Another critical factor is that sports betting markets are non-stationary - constantly changing due to new rules, team strategies, and growing market efficiency. For instance, a strategy that works one season might fail in the next as teams adapt and conditions shift. Backtesting assumes that historical trends will hold steady, but in live betting, this is rarely the case.

What is Walk-Forward Analysis?

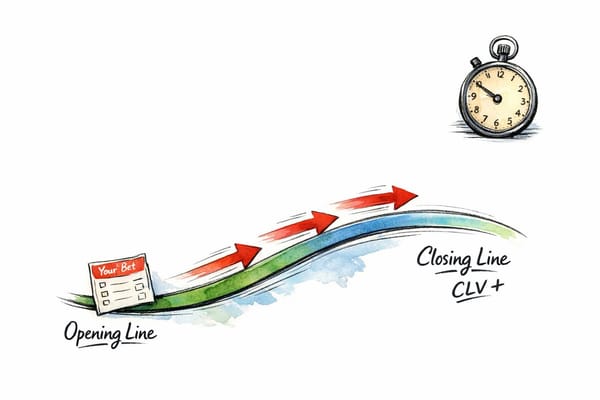

Walk-forward analysis (WFA) is a dynamic way to test betting strategies by repeatedly training on historical data and then testing on new, unseen periods. Unlike traditional backtesting, which applies a single optimization across all data, WFA mirrors how betting works in practice: bettors adjust their strategies based on recent results, bet on upcoming games, and then recalibrate as new data becomes available.

The standout feature of WFA is its rolling approach. After completing one test segment, the time windows shift forward - often by the length of the test period - and the process starts again. This creates several independent validation cycles, forcing the strategy to prove its effectiveness on data it hasn’t encountered before. It’s a significant shift from the static "fit once, test once" method, which often leads to curve-fitting.

"Walk-forward optimization (WFO) is a backtesting methodology that evaluates trading strategies by repeatedly training on a historical window and testing on the immediately following out-of-sample period... It mimics the actual deployment cycle." - PapersWithBacktest

To gauge a strategy’s reliability, analysts use Walk-Forward Efficiency (WFE). This metric compares annualized returns from out-of-sample data to those from in-sample data. A WFE score above 50-60% indicates that the strategy retains at least half of its optimized performance on unseen data, suggesting it’s not just overfitted. The concept was first introduced by Robert E. Pardo in 1992.
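As a rough illustration, WFE can be computed as follows. The sketch makes three assumptions that are not specified above: returns are compounded to an annual rate using 365 calendar days, WFE is treated as undefined when in-sample returns are not positive, and the return figures are invented.

```python
def annualize(total_return, days):
    """Compound a total return earned over `days` calendar days to an annual rate."""
    return (1 + total_return) ** (365 / days) - 1

def walk_forward_efficiency(is_return, is_days, oos_return, oos_days):
    """Annualized out-of-sample return divided by annualized in-sample return."""
    is_annual = annualize(is_return, is_days)
    if is_annual <= 0:
        raise ValueError("WFE is not meaningful when in-sample returns are not positive")
    return annualize(oos_return, oos_days) / is_annual

# Invented figures: +21% over a 2-year optimization window (10% a year),
# then +3% over the following half year (about 6.1% a year)
wfe = walk_forward_efficiency(0.21, 730, 0.03, 182.5)
print(f"WFE = {wfe:.0%}")  # 61%, just above the 50-60% guideline
```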

How Walk-Forward Analysis Works

The process is straightforward but computationally intensive. First, you divide your historical data into rolling time periods. A typical setup might use 2–4 years of data for optimization (called the "in-sample" window) and 3–6 months for testing (the "out-of-sample" window). This balance ensures enough history for training while keeping test periods realistic.

Next, you optimize the strategy parameters within the training window, finding the best settings for that specific period. Then, you apply these parameters to the out-of-sample segment that immediately follows, simulating real-time betting without foreknowledge of future outcomes.

After testing, you shift the time windows forward. The out-of-sample data becomes part of the training set, and the strategy is re-optimized before being tested on the next unseen segment. This cycle is repeated across the entire dataset.
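The cycle of optimizing in-sample, testing on the next out-of-sample slice, and rolling forward can be sketched as a generic loop. This is a minimal illustration under several assumptions. The data is a time-ordered list, `evaluate` is a user-supplied scoring function, and the parameter search is an exhaustive grid. Windows roll forward by one test period, and the training window keeps a fixed length, so the oldest data drops off. The threshold rule, the `threshold_profit` helper, and the games are hypothetical.

```python
from typing import Any, Callable, Dict, List, Sequence

def walk_forward(data: Sequence[Any], params: Sequence[Any],
                 evaluate: Callable[[Any, Sequence[Any]], float],
                 train_size: int, test_size: int) -> List[Dict[str, Any]]:
    """Rolling walk-forward analysis over time-ordered data."""
    results = []
    start = 0
    while start + train_size + test_size <= len(data):
        train = data[start:start + train_size]
        test = data[start + train_size:start + train_size + test_size]
        # In-sample optimization: exhaustive search over the parameter grid
        best = max(params, key=lambda p: evaluate(p, train))
        results.append({
            "params": best,
            "in_sample": evaluate(best, train),
            # Out-of-sample: these games were never seen during optimization
            "out_of_sample": evaluate(best, test),
        })
        start += test_size  # roll both windows forward by one test period
    return results

# Hypothetical rule: bet only when the model's signal clears a threshold
def threshold_profit(threshold, segment):
    return sum(profit for signal, profit in segment if signal >= threshold)

# Invented (signal, profit per unit) pairs, oldest first
games = [(0.1, -1.0), (0.6, 0.9), (0.3, -1.0), (0.8, 0.9), (0.5, 0.9),
         (0.2, -1.0), (0.7, -1.0), (0.4, 0.9), (0.9, 0.9), (0.6, -1.0)]
folds = walk_forward(games, params=[0.0, 0.25, 0.5, 0.75],
                     evaluate=threshold_profit, train_size=4, test_size=2)
for f in folds:
    print(f["params"], round(f["in_sample"], 2), round(f["out_of_sample"], 2))
```

On this toy data, the selected threshold is 0.5, 0.5, then 0.25 across the three folds. Each fold looks profitable in-sample, yet only the first is profitable out-of-sample. That is a small-scale version of the gap that walk-forward testing is designed to expose.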

For example, a 2025 technical guide used Apple Inc. (AAPL) stock data from 2010 to 2025. A strategy optimized statically through 2024 showed a 16.5% loss when applied to early 2025. Implementing Walk-Forward Optimization with a 752-day training period and a 72-day re-optimization interval instead yielded a 55% return, illustrating how periodic re-optimization can help a strategy keep pace with changing conditions.

To avoid over-optimization, it’s recommended to allocate 70–80% of the data for training and 20–30% for validation. This iterative process lays the groundwork for several key advantages.

Benefits of Walk-Forward Analysis

Walk-forward analysis addresses the shortcomings of static backtesting by requiring strategies to succeed across multiple independent periods instead of relying on a single historical dataset.

One major advantage is reducing overfitting. By testing on multiple independent periods, WFA prevents strategies from merely memorizing historical noise or one-off patterns that won’t recur. Each out-of-sample test acts as a reality check, revealing whether the strategy has genuine predictive ability or is just over-optimized for a specific dataset.

Another benefit is its ability to adapt to changing conditions. Betting markets are constantly evolving - team strategies shift, rules change, and market dynamics improve over time. WFA’s rolling window approach allows strategies to adapt to these shifts by continuously incorporating the latest data into the training set. This is far more realistic than assuming a strategy optimized in 2020 will still work seamlessly in 2026.

In a study conducted in January 2026, Nicolae Filip Stanciu evaluated a Simple Moving Average (SMA) crossover strategy on five years of SPY (S&P 500 ETF) data, using both traditional backtesting and WFA. While the backtest suggested profitability, WFA exposed parameter instability that would have led to losses in live trading.

Lastly, WFA provides more reliable performance estimates. Since every test is conducted on unseen data, the results are much closer to what you’d experience in real-world betting. This makes WFA a more dependable tool for evaluating whether a strategy can succeed when actual money is at stake.

Key Differences Between Walk-Forward Analysis and Backtesting

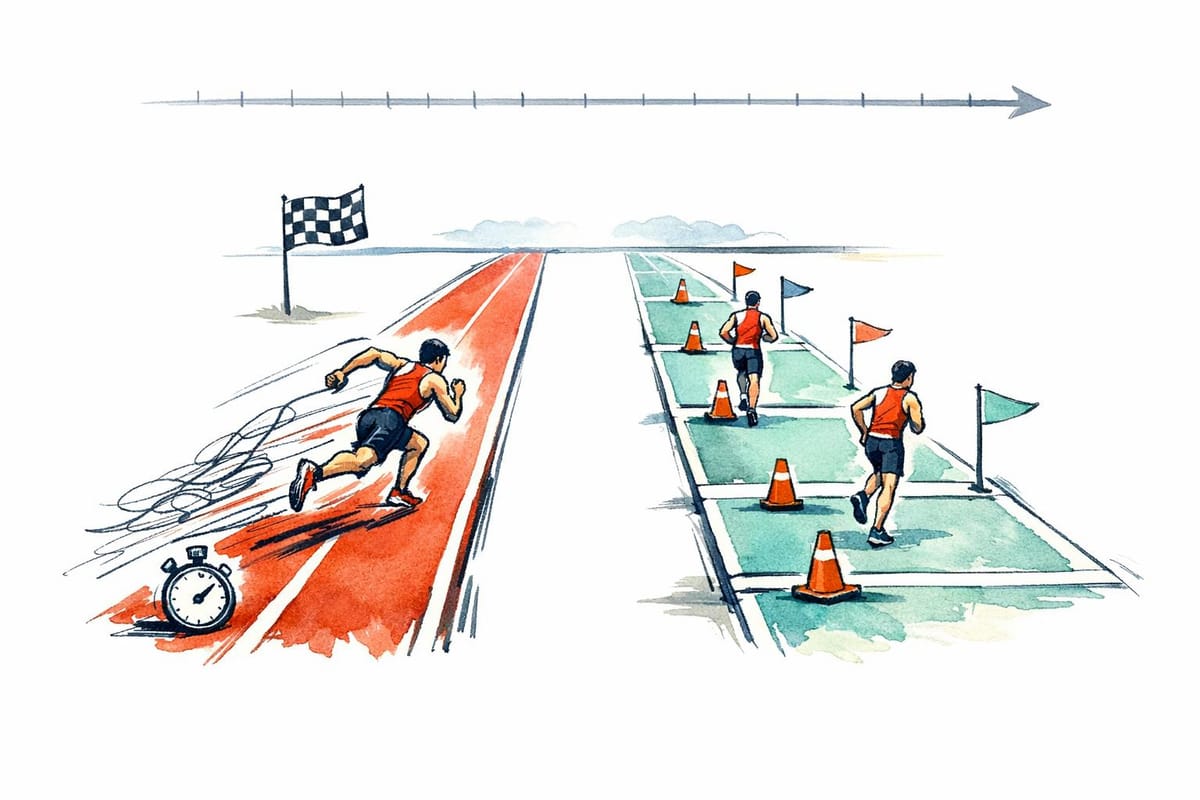

The main differences between walk-forward analysis and traditional backtesting lie in how they handle data and optimize parameters. Backtesting takes a static approach, identifying a single "best" set of parameters and applying it across the entire historical dataset. Walk-forward analysis, on the other hand, is more dynamic, constantly re-optimizing parameters based on recent data and testing them on unseen segments.

Another key distinction is how data is used. Backtesting typically uses all available historical data for optimization, effectively giving the model access to the entire dataset before testing. Walk-forward analysis splits data into separate training and validation segments, often allocating 70–80% for training and 20–30% for validation, ensuring the model does not "see" the validation data during training.

"Traditional backtesting creates a single, static view of strategy performance... When traders optimize parameters to maximize returns on this full dataset, they're essentially peeking at the answers before taking the test."

Overfitting risk is another major difference. Backtesting is prone to curve-fitting, where strategies are tailored to historical noise rather than genuine market patterns. For example, a trader backtesting an EMA crossover strategy over 2013–2023 obtained a Sharpe Ratio of 3.0. However, walk-forward analysis with a 2-year training window and a 6-month test window revealed a more realistic Sharpe Ratio of 0.9, because the optimal parameters shifted over time (e.g., 10/25 in 2015 versus 25/60 in 2020). Walk-forward analysis acts as a safeguard by requiring strategies to prove themselves on unseen data.

Computational requirements further separate the two. Backtesting is quick and efficient, often taking just minutes to run. In contrast, walk-forward analysis involves multiple rounds of optimization, which can extend processing times to hours or even days. For instance, a 10-year test with monthly windows might require 120 optimization cycles, compared to just one in backtesting. This makes backtesting useful for quickly discarding unviable ideas, while walk-forward analysis is better suited for validating the durability of promising strategies.
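The 120 figure follows from simple counting: each out-of-sample month needs its own optimization. The helper below is a sketch that assumes a fixed-length training window and windows that roll forward by one test period. The function name and the 2-year training window are illustrative choices, not part of the original example.

```python
def walk_forward_cycles(total_months, train_months, test_months, step_months=None):
    """Count optimize-then-test cycles in a rolling walk-forward run.

    Assumes a fixed-length training window; by default both windows roll
    forward by one test period after each cycle.
    """
    step = step_months or test_months
    slack = total_months - train_months - test_months
    return 0 if slack < 0 else slack // step + 1

# 2-year training window followed by 10 years (120 months) of out-of-sample tests
print(walk_forward_cycles(144, 24, 1))  # monthly test windows: 120 optimizations
print(walk_forward_cycles(144, 24, 3))  # quarterly test windows: 40 optimizations
```

Lengthening the test window cuts the number of optimizations, at the cost of re-optimizing less often.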

Comparison Table: Walk-Forward vs. Backtesting

| Feature | Traditional Backtesting | Walk-Forward Analysis |
|---|---|---|
| Methodology | Static; single historical replay | Adaptive; rolling optimization and testing |
| Data Usage | Uses 100% of data for optimization | Segments data into In-Sample and Out-of-Sample |
| Overfitting Risk | High (Curve fitting) | Low (Protects against noise) |
| Computational Cost | Low; fast execution | High; requires multiple optimization cycles |
| Realism | Low; assumes static market conditions | High; reflects re-optimization in live markets |
| Primary Goal | Strategy evaluation and ROI | Robustness and parameter stability |

This table highlights the key contrasts, showing how each method serves different purposes in strategy development and validation.

When to Use Walk-Forward Analysis vs. Backtesting

Choosing between backtesting and walk-forward analysis depends on the complexity of your strategy and how much the sports markets you're working with tend to change over time.

Start with backtesting. This method is ideal for quickly filtering out weak ideas and experimenting with parameter ranges. For example, if you're testing a straightforward system - like betting on home underdogs during specific weather conditions - backtesting can validate your concept efficiently. It's a great way to lay the groundwork during the early stages of strategy development.

Switch to walk-forward analysis when dealing with more complex strategies, especially those involving numerous parameters or machine learning models that risk being overfitted to historical data. Sports betting markets are constantly evolving, with factors like roster changes, coaching adjustments, and rule updates reshaping the landscape. A strategy that performs well in the past might falter under new conditions. Walk-forward analysis helps address this by regularly re-optimizing your strategy as fresh data becomes available, simulating how it would perform in live, shifting markets.

A solid validation process combines multiple steps: start with backtesting to refine ideas, move to walk-forward analysis for stress-testing robustness, and finalize with paper trading to confirm results. For most systematic strategies, using optimization windows of 2–4 years alongside validation periods of 3–6 months strikes a good balance between leveraging historical data and staying relevant to current trends. A Walk-Forward Efficiency (WFE) score above 50–60%, where WFE is the ratio of annualized out-of-sample returns to annualized in-sample returns, often signals a strategy worth pursuing.

Using AI Tools for Better Testing

AI can streamline and enhance this layered approach to testing.

Take WagerProof, for example. This platform automates the process of strategy testing and validation. Its AI-powered research agents run continuously, analyzing sports data and matchups and generating picks using over 50 adjustable parameters. In effect, it performs ongoing walk-forward analysis for you. Meanwhile, WagerBot Chat taps into live professional data, integrating factors like weather, odds, injury updates, and predictive models into a detailed, multi-step evaluation.

WagerProof also identifies outliers and value bets, alerting you when market spreads deviate from expected norms or when models detect potential advantages. Importantly, it doesn’t just tell you a bet has value - it explains why. This transparency helps you avoid overfitting by providing clear insights into the reasoning behind each recommendation. Instead of manually running 120 optimization passes for a 10-year test with monthly windows, platforms like this handle the heavy lifting, leaving you free to interpret results and refine your strategy.

Pros and Cons of Both Methods

Choosing the right testing method means weighing the strengths and weaknesses of each approach. Here's a closer look at the benefits and challenges of traditional backtesting versus walk-forward analysis, presented side by side for clarity.

Backtesting: Pros and Cons

Backtesting shines when it comes to speed and simplicity. It allows you to quickly evaluate years of sports betting data and test multiple ideas without risking actual capital. This makes it an excellent choice for the early stages of strategy development, where the goal is to eliminate weak concepts efficiently.

That said, its static nature is a major limitation. Backtesting assumes that past conditions will persist, ignoring critical factors like market changes, roster adjustments, or rule updates. This can lead to overconfidence, as strategies fine-tuned to historical data often fail when applied to live scenarios.

| Advantages | Disadvantages |
|---|---|
| Processes years of data in minutes | Relies on static parameters, ignoring market changes |
| Low computational demands and easy to implement | High risk of overfitting to historical noise |
| Ideal for quickly testing and filtering ideas | Can create false confidence about live results |

Walk-Forward Analysis: Pros and Cons

Walk-forward analysis addresses many of the shortcomings of backtesting by offering dynamic validation. It minimizes overfitting by requiring strategies to perform well on unseen data segments, mimicking real-world cycles of optimization and deployment. This method is better suited to adapting to evolving market conditions and includes the Walk-Forward Efficiency (WFE) metric, which helps gauge strategy reliability - a score above 50–60% is often a good indicator of strength.

However, this approach demands significant computational resources. For instance, testing a 10-year period with monthly windows requires 120 separate optimization runs, turning a quick task into a multi-day process. Additionally, results can be highly sensitive to the chosen window sizes, and there's a risk of over-optimizing the process itself.

| Advantages | Disadvantages |
|---|---|
| Adapts well to changing market conditions | Requires substantial time and computing power |
| Reduces overfitting risks significantly | Results depend heavily on window size choices |
| Simulates real-world optimization cycles | Reactive approach may lag behind sudden changes |
| Offers WFE metric to measure strategy reliability | Potential for over-optimization in the process |

Conclusion

Backtesting and walk-forward analysis are both critical for validating sports betting strategies, but relying on just one can lead to incomplete or misleading results. Backtesting shines in its ability to quickly evaluate ideas, allowing you to sift through years of data to identify potential opportunities. However, it comes with a major pitfall: excessive optimization. Research has shown that even random strategies can seem profitable in backtests if overfitted to historical data. This issue, known as curve fitting, occurs when a model captures noise instead of uncovering genuine, repeatable patterns.

Walk-forward analysis helps mitigate this issue by testing strategies on unseen data repeatedly. As one expert explains, "Traditional backtesting provides an overly optimistic, static view of performance... WFO, conversely, imposes realism by forcing the strategy to prove its mettle on unseen data segments repeatedly". The difference can be stark. For example, a trader’s EMA crossover strategy appeared highly successful in traditional backtesting, boasting a Sharpe Ratio of 3.0 over 10 years (2013-2023). However, walk-forward optimization revealed a much lower compound Sharpe Ratio of 0.9.

The best results come from combining both methods. Start with backtesting to eliminate weak concepts, then apply walk-forward analysis to stress-test the strategy across varying conditions. Finally, hold out 10-20% of your data as a completely untouched sample for final validation. A 70/30 split - 70% for optimization and 30% for validation - is a practical setup for these tests.
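Here is a minimal sketch of that split. It assumes rows are already sorted oldest-first. The 15% holdout is one choice within the 10–20% range above, and the function name is hypothetical. Integer percentages are used so that the slice boundaries are exact.

```python
def chronological_split(rows, holdout_pct=15, train_pct=70):
    """Split oldest-first rows into (optimization, validation, final holdout).

    The holdout is the most recent holdout_pct percent of rows and should be
    used exactly once, at the very end. The rest is split train_pct / remainder.
    Nothing is shuffled, so each set lies strictly after the one before it.
    """
    n = len(rows)
    holdout_start = n - n * holdout_pct // 100
    work = rows[:holdout_start]
    train_end = len(work) * train_pct // 100
    return work[:train_end], work[train_end:], rows[holdout_start:]

optimize, validate, holdout = chronological_split(list(range(100)))
print(len(optimize), len(validate), len(holdout))  # 59 26 15
```

Shuffling before splitting, as is common in other machine-learning settings, would leak later games into the optimization set. That is why this sketch slices by position only.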

AI tools can streamline this entire process. Platforms like WagerProof simplify access to professional-grade betting data, historical stats, and advanced models. Their AI research agents continuously analyze matchups and crunch data, while WagerBot Chat integrates live data sources, such as weather, odds, injury reports, and predictions, into cohesive recommendations. This can reduce the data leakage risks often associated with manual testing.

FAQs

How do I choose the best walk-forward window sizes?

When selecting walk-forward window sizes, balance the in-sample (training) and out-of-sample (testing) periods against your betting goals and how quickly your market changes. A common starting point for systematic strategies is 2–4 years in-sample and 3–6 months out-of-sample. Shorter settings, such as 6–12 months in-sample and 1–3 months out-of-sample, adapt faster but give each optimization less data to learn from. The right durations depend on your data and strategy.

It's important to test multiple configurations, validate the results thoroughly, and fine-tune the setup. This ensures your model performs well under various market conditions while minimizing the risk of overfitting.

What’s the simplest way to avoid look-ahead bias in tests?

To steer clear of look-ahead bias, base every decision solely on information that was available when the bet would have been placed. Use the odds, injury reports, and weather as they stood at that moment, not closing lines or final results. Test strategies in chronological order, training models on in-sample data and evaluating them only on later out-of-sample data they have not seen. Sticking to this timeline keeps future data from influencing past decisions, so the results stay realistic.
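A cheap safeguard is to assert the timeline before running any test. The sketch below is illustrative: the function name and the optional `gap_days` buffer are assumptions, not a standard API.

```python
from datetime import date

def check_chronological(train_dates, test_dates, gap_days=0):
    """Raise ValueError unless every test date falls after every training date.

    gap_days optionally demands at least that many full days between the last
    training game and the first test game (an illustrative extra buffer).
    """
    last_train, first_test = max(train_dates), min(test_dates)
    if (first_test - last_train).days <= gap_days:
        raise ValueError(f"training ends {last_train}, test starts {first_test}")

check_chronological([date(2024, 1, 6), date(2024, 3, 1)], [date(2024, 3, 2)])  # passes
```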

What WFE level is good enough to bet live?

There is no universal cutoff. A Walk-Forward Efficiency (WFE) above roughly 50–60% is the commonly cited threshold: it means the strategy kept at least half of its in-sample performance on unseen data, which suggests it is not simply overfitted. WFE alone does not prove a strategy is ready for real money, so confirm it on a final untouched holdout and with paper trading before betting live.